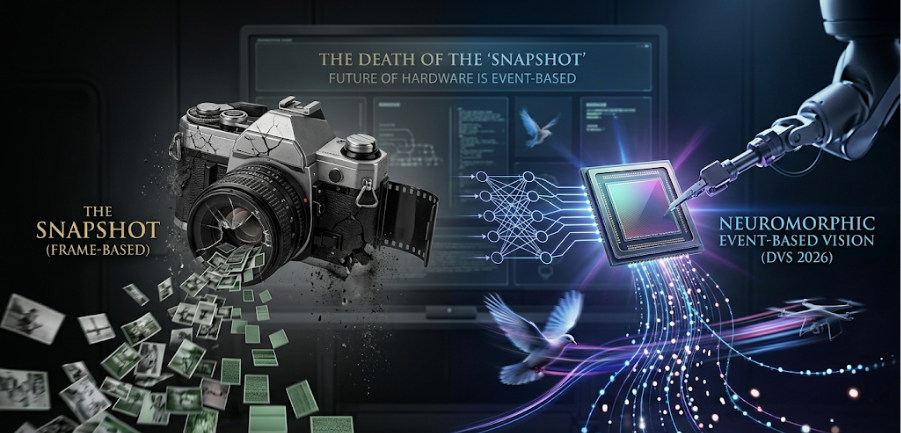

For nearly a century, we have forced machines to see the world through a series of static snapshots. Whether it’s a cinema camera at 24 frames per second or a high-end industrial sensor at 240, the philosophy remains the same: Capture everything, process it later.

But in the era of AI and the “Edge,” this philosophy has become a massive energy liability.

The Redundancy Tax

Traditional sensors are incredibly wasteful. If a camera is pointed at a still landscape, it continues to capture millions of pixels of the exact same data, frame after frame. Your processor then burns energy “confirming” that nothing has changed.

In a world heading toward tens of billions of IoT devices, we can no longer afford this “Redundancy Tax.” We don’t need more data; we need better data.
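To put rough numbers on the Redundancy Tax, here is a back-of-the-envelope sketch. Every figure in it is an illustrative assumption rather than a measurement: the VGA resolution, the 8 bytes per event, the 100 events per second per active pixel, and the function names themselves are all hypothetical.

```python
def frame_data_rate(width, height, fps, bytes_per_pixel=1):
    """Bytes per second from a frame camera. Note: scene content does not matter."""
    return width * height * fps * bytes_per_pixel


def event_data_rate(width, height, active_fraction,
                    events_per_active_pixel_per_s, bytes_per_event=8):
    """Bytes per second from an event sensor. Scales with how much of the scene changes.

    bytes_per_event=8 is an assumed encoding size (x, y, timestamp, polarity).
    """
    active_pixels = width * height * active_fraction
    return active_pixels * events_per_active_pixel_per_s * bytes_per_event


WIDTH, HEIGHT = 640, 480  # assumed VGA sensor

# A frame camera pays the same price for a still landscape and a busy street.
frame_bps = frame_data_rate(WIDTH, HEIGHT, fps=30)

# An event sensor pays only for change: nothing for a static scene...
static_bps = event_data_rate(WIDTH, HEIGHT, active_fraction=0.0,
                             events_per_active_pixel_per_s=100)

# ...and a modest amount when 1% of the pixels see motion.
moving_bps = event_data_rate(WIDTH, HEIGHT, active_fraction=0.01,
                             events_per_active_pixel_per_s=100)

print(f"frame camera : {frame_bps / 1e6:.2f} MB/s")
print(f"event, static: {static_bps / 1e6:.2f} MB/s")
print(f"event, moving: {moving_bps / 1e6:.2f} MB/s")
```

The flip side is worth keeping in mind: if most of the scene changes at once (camera shake, flickering lights), the event rate under these same assumptions can exceed the frame camera's fixed rate.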

Enter Neuromorphic Sensing (Event-Based Vision)

Inspired by the human retina, event-based sensors have no global frame rate. Instead of capturing a whole image at set intervals, each pixel operates independently and asynchronously.

- The Rule: A pixel reports data only when the brightness it sees changes by more than a set threshold (an “event”).

- The Result: If nothing moves, no data is sent. If a bird flies across the sky, only the pixels tracking the bird’s path fire.
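The rule and the result above can be sketched in a few lines. This is a simplified simulation, not how the silicon works: a real sensor is asynchronous and never sees “frames,” and it may emit several events for one large change. The threshold value, the `(x, y, t, polarity)` tuple layout, and the function names are assumptions for illustration.

```python
import math

EPS = 1e-6  # avoids log(0) for fully dark pixels


def init_reference(intensities):
    """Store each pixel's starting log-brightness as its reference level."""
    return [[math.log(v + EPS) for v in row] for row in intensities]


def generate_events(reference, intensities, t, threshold=0.2):
    """Return (x, y, t, polarity) for every pixel whose log-brightness moved
    by at least `threshold` since that pixel last fired.

    `reference` is updated in place, but only for pixels that fire.
    """
    events = []
    for y, row in enumerate(intensities):
        for x, value in enumerate(row):
            log_value = math.log(value + EPS)
            delta = log_value - reference[y][x]
            if abs(delta) >= threshold:
                polarity = 1 if delta > 0 else -1  # brighter or darker
                events.append((x, y, t, polarity))
                reference[y][x] = log_value
    return events


still_scene = [[10.0, 10.0, 10.0],
               [10.0, 10.0, 10.0]]
bird_appears = [[10.0, 10.0, 10.0],
                [10.0, 50.0, 10.0]]  # one pixel gets brighter

reference = init_reference(still_scene)
static_events = generate_events(reference, still_scene, t=0.001)
moving_events = generate_events(reference, bird_appears, t=0.002)

print(static_events)  # nothing moved, nothing sent
print(moving_events)  # only the changed pixel reports
```

Five of the six pixels stay silent in the second step, which is the whole point: the output grows with the amount of change, not with the size of the sensor.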

The Hardware Advantage

Moving from snapshots to events offers three game-changing benefits for hardware engineering:

- Milliwatt-Scale Power Consumption: Because most pixels are idle when the scene is largely static, the sensor reads out and transmits far less data, and power draw can drop by orders of magnitude. For always-on vision, we are moving from watts toward milliwatts.

- No Fixed “Frame Rate”: Because pixels respond continuously instead of waiting for a global exposure, motion blur is greatly reduced. Event-based sensors can track very fast objects with a temporal resolution measured in microseconds.

- Privacy by Design: Because the sensor outputs changes in brightness (mostly moving edges) rather than full-resolution images, it exposes less identifying detail, which makes it a better fit for smart home and medical applications.

The 2026 Landscape: From Drones to Humanoids

As we move into 2026, this hardware is being integrated into the most demanding environments, driven by five developments:


1. Seeing Through “Dynamic Occlusions”

Traditional cameras struggle in “dirty” environments—rain, snow, dust, or debris can ruin a frame.

- The Matter: In 2026, we are using event cameras to “see through” these occlusions. Because a raindrop moves differently than the background, the event sensor can isolate the background pixels in microseconds.

- The Benefit: This is a game-changer for Autonomous Vehicles (AVs) and Drones operating in harsh weather. While a traditional camera sees a “blurry mess,” the event sensor reconstructs a clear view of the road behind the rain.

2. The 5-Point “Eventail” Solver

For a robot to move, it needs to know its velocity. Traditionally, this takes massive compute power to compare two high-res frames.

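- The Matter: A mathematical approach called the 5-point minimal solver works on “eventails,” the spatio-temporal manifolds traced out by the events that a straight line in the scene generates as the camera moves.

- The Detail: Instead of comparing whole images, the solver needs only 5 events from a single line, together with a known rotation (for example, from an IMU), to estimate the line’s parameters and the camera’s linear velocity in the directions that line constrains. Combining several lines yields a full linear velocity estimate.

- The Benefit: It allows a drone to estimate its velocity with far less compute than a traditional frame-to-frame visual odometry pipeline.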

3. The “Hybrid” DAVIS Architecture

We are not throwing away traditional cameras yet. The most successful hardware in 2026 uses DAVIS (Dynamic and Active-pixel Vision Sensor).

- The Matter: This is a single chip that contains both a traditional frame-based sensor and an event-based sensor sharing the same pixels.

- The Strategy: The “event” side stays on 24/7 (low power) to detect motion. Once it “wakes up” due to an event, it triggers the “frame” side to take a high-res photo for identification.

- The Benefit: This is the perfect solution for Smart Doorbells and Security Cameras—extending battery life from weeks to months.
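The wake-up strategy above comes down to a small piece of control logic. Here is a minimal sketch, assuming a sliding-window event count as the motion test. The class name, the 50 ms window, and the event threshold are hypothetical choices, not part of any DAVIS driver or SDK.

```python
from collections import deque


class WakeOnMotion:
    """Decide when the always-on event side should wake the frame side."""

    def __init__(self, window_s=0.05, min_events=20):
        self.window_s = window_s      # how far back we count events
        self.min_events = min_events  # events needed to call it "motion"
        self.timestamps = deque()

    def on_event(self, t):
        """Feed one event timestamp (seconds). Returns True to trigger a frame."""
        self.timestamps.append(t)
        # Drop events that have fallen out of the sliding window.
        while t - self.timestamps[0] > self.window_s:
            self.timestamps.popleft()
        if len(self.timestamps) >= self.min_events:
            self.timestamps.clear()   # avoid re-triggering on the same burst
            return True
        return False


trigger = WakeOnMotion(window_s=0.05, min_events=5)

sensor_noise = [0.0, 0.2, 0.4, 0.6]                    # isolated events
visitor_arrives = [1.000, 1.001, 1.002, 1.003, 1.004]  # a dense burst

noise_result = [trigger.on_event(t) for t in sensor_noise]
burst_result = [trigger.on_event(t) for t in visitor_arrives]

print(noise_result)  # stray events never wake the frame sensor
print(burst_result)  # the fifth event in the burst does
```

Requiring a burst rather than a single event is a deliberate choice: isolated noise events are common on real sensors, and every false wake-up costs a full frame capture.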

4. High Dynamic Range (HDR) Without the Work

Traditional HDR requires taking 3+ photos at different exposures and merging them, which causes “ghosting” if something moves.

- The Matter: Event cameras have a natural dynamic range of up to roughly 140 dB (compared with 60-80 dB for standard cameras).

- The Science: Since they measure logarithmic changes in light, they can see a person standing in a dark tunnel while simultaneously seeing the bright sunlight at the exit—without any “blown-out” white spots.
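A quick calculation shows what those decibel figures mean and why the logarithmic response matters. It uses the 20·log10 convention that is standard for image sensor dynamic range. The brightness values are made-up numbers in arbitrary units, and the helper names are hypothetical.

```python
import math


def db_to_brightness_ratio(db):
    """Ratio between the brightest and darkest measurable light levels."""
    return 10 ** (db / 20)


def log_change(before, after):
    """The quantity an event pixel responds to: change in log-brightness."""
    return math.log(after / before)


event_ratio = db_to_brightness_ratio(140)  # ten million to one
frame_ratio = db_to_brightness_ratio(60)   # one thousand to one

# A 50% brightness increase, once deep in the tunnel and once in sunlight.
tunnel_change = log_change(2.0, 3.0)
sunlit_change = log_change(20000.0, 30000.0)

print(f"140 dB covers a {event_ratio:,.0f}:1 brightness range")
print(f" 60 dB covers a {frame_ratio:,.0f}:1 brightness range")
print(f"tunnel: {tunnel_change:.3f}  sunlight: {sunlit_change:.3f}")
```

The two log changes come out identical even though the absolute light levels differ by a factor of 10,000. That is why a single contrast threshold can work in the dark tunnel and at the sunlit exit at the same time.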

5. Neuromorphic Hardware Pairing (Intel Loihi 3 / Akida 2.0)

A traditional CPU can read an event stream, but it handles sparse, asynchronous data inefficiently; it’s like trying to put a jet engine in a car.

- The Matter: In 2026, these sensors are being paired with Neuromorphic Chips (like the Intel Loihi 3). These chips process “spikes” of data rather than continuous streams.

- The Stats: An event sensor paired with a neuromorphic chip can consume on the order of 1/1000th the power of a GPU doing the same task, though the ratio depends heavily on how sparse the workload is.
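To make “processing spikes” concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, the textbook building block of spiking networks. It is a generic illustration in plain Python, not the programming model of Loihi, Akida, or any other chip. The time constant, weight, and threshold are arbitrary assumptions.

```python
import math


class LIFNeuron:
    """Leaky integrate-and-fire neuron that is updated only when a spike arrives."""

    def __init__(self, tau_s=0.01, weight=0.3, threshold=1.0):
        self.tau_s = tau_s          # how quickly the potential leaks away
        self.weight = weight        # how much each input spike adds
        self.threshold = threshold  # potential needed to fire
        self.potential = 0.0
        self.last_t = None

    def on_spike(self, t):
        """Process one input spike at time t (seconds). Returns True if it fires."""
        if self.last_t is not None:
            # Apply the leak for the whole silent gap in one step.
            self.potential *= math.exp(-(t - self.last_t) / self.tau_s)
        self.last_t = t
        self.potential += self.weight
        if self.potential >= self.threshold:
            self.potential = 0.0    # reset after firing
            return True
        return False


dense_input = [0.000, 0.001, 0.002, 0.003, 0.004]  # spikes 1 ms apart
sparse_input = [0.0, 0.1, 0.2, 0.3, 0.4]           # spikes 100 ms apart

busy = LIFNeuron()
dense_output = [busy.on_spike(t) for t in dense_input]

quiet = LIFNeuron()
sparse_output = [quiet.on_spike(t) for t in sparse_input]

print(dense_output)   # closely spaced spikes add up and the neuron fires
print(sparse_output)  # widely spaced spikes leak away before they add up
```

Notice that no code runs between spikes. The work done is proportional to the number of input events, which is where the power savings of pairing an event sensor with spike-based hardware are expected to come from.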

Comparison Summary: The Shift in Stats

| Feature | Snapshot (Frame-Based) | Event-Based (Neuromorphic) |
| --- | --- | --- |
| Latency | ~33 ms (at 30 fps) | Microseconds |
| Data Rate | High (redundant) | Low (sparse, activity-dependent) |
| Dynamic Range | 60-80 dB | Up to ~140 dB |
| Power Draw | Watts | Milliwatts |
| Ideal For… | Cinema, social media | Robotics, drones, AVs |

The environments where this hardware is landing first:

- Autonomous Drones: Navigating through dense forests at high speeds without the “lag” of traditional image processing.

- Wearable Tech: Glasses that can track eye movement for AR/VR using almost zero battery.

- Industrial IoT: Sensors that can detect the microscopic vibration of a failing motor weeks before a human—or a traditional camera—could ever see it.

Conclusion

The “Snapshot” era was a limitation of our past technology. The future belongs to hardware that doesn’t just record the world, but feels the change within it. To build truly intelligent machines, we must first stop drowning them in redundant data.