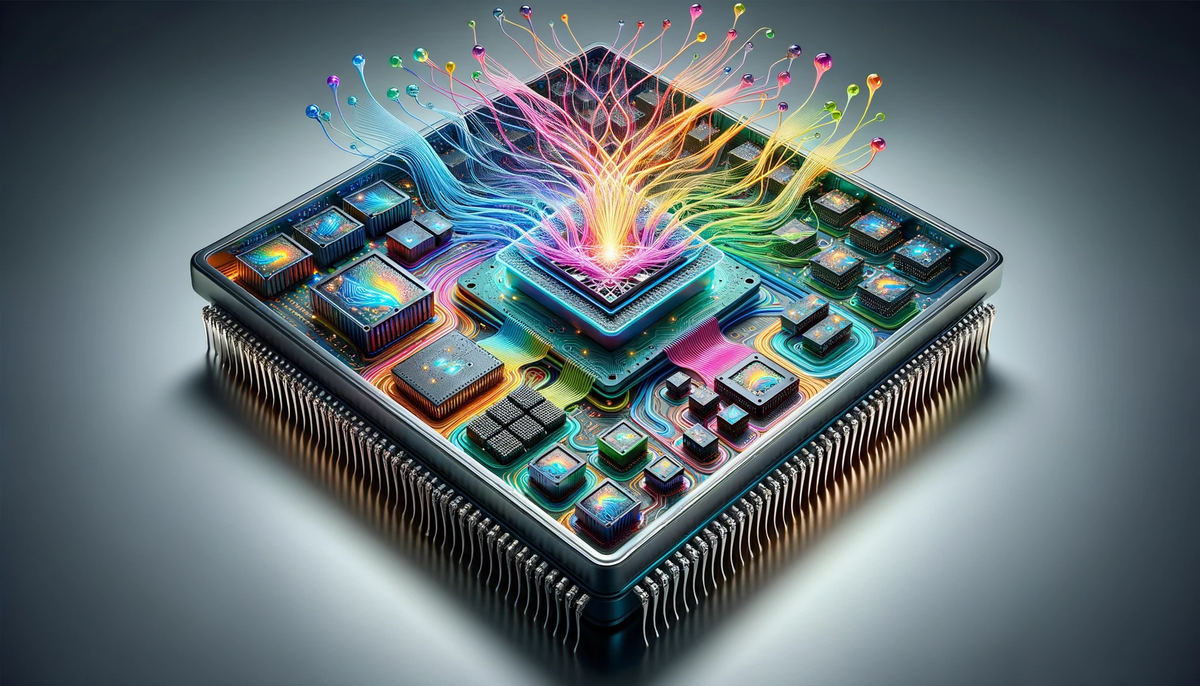

AI models are becoming larger, faster, and more demanding, but software alone cannot carry this evolution. Much of the performance gain begins deep inside the chip. Hardware optimization is now the backbone of modern AI, enabling models to train faster, serve inference with lower latency, and scale without breaking energy budgets.

Hashing Hardware focuses on engineering high-efficiency systems that support these next-generation workloads. By optimizing everything from compute cores to memory channels, we eliminate bottlenecks that slow AI down.

Why Hardware Matters More Than Ever

A single forward pass through a modern model can involve billions of arithmetic operations. Without specialized hardware, even simple inference tasks can become slow and expensive.

Key challenges include:

- Memory bandwidth limitations slowing model training

- Thermal throttling reducing clock speeds as chips heat up

- Inefficient compute layouts that waste power

- Latency issues in multi-node workloads

Optimized hardware solves these problems at the root, delivering more performance per watt, per cycle, and per dollar.
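The memory bandwidth challenge listed above can be made concrete with a roofline-style estimate, which caps attainable throughput at the lower of peak compute and memory bandwidth multiplied by arithmetic intensity (operations per byte moved). The sketch below is illustrative only: the accelerator figures (100 TFLOP/s peak, 1 TB/s memory bandwidth), the workload intensities, and the function names are assumptions, not measurements of any Hashing Hardware system.

```python
def attainable_flops(peak_flops: float, mem_bandwidth: float,
                     arithmetic_intensity: float) -> float:
    """Roofline bound: the lower of the compute roof and the memory roof.

    peak_flops            peak compute rate, in FLOP/s
    mem_bandwidth         memory bandwidth, in bytes/s
    arithmetic_intensity  FLOPs performed per byte moved from memory
    """
    return min(peak_flops, mem_bandwidth * arithmetic_intensity)


def is_memory_bound(peak_flops: float, mem_bandwidth: float,
                    arithmetic_intensity: float) -> bool:
    """True when the workload sits left of the roofline ridge point."""
    ridge_point = peak_flops / mem_bandwidth  # FLOPs per byte
    return arithmetic_intensity < ridge_point


if __name__ == "__main__":
    # Hypothetical accelerator, chosen only to make the arithmetic easy.
    PEAK_FLOPS = 100e12  # 100 TFLOP/s
    MEM_BW = 1e12        # 1 TB/s

    # Hypothetical workloads with low and high arithmetic intensity.
    for name, intensity in [("low-intensity kernel", 2.0),
                            ("high-intensity kernel", 400.0)]:
        bound = attainable_flops(PEAK_FLOPS, MEM_BW, intensity)
        kind = "memory-bound" if is_memory_bound(PEAK_FLOPS, MEM_BW, intensity) else "compute-bound"
        print(f"{name}: {bound / 1e12:.0f} TFLOP/s attainable ({kind})")
```

Under these assumed numbers, a kernel that performs only a couple of operations per byte cannot approach the chip's peak compute rate no matter how many cores it has, which is why memory bandwidth is listed first among the challenges.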

Where Optimization Happens

Hashing Hardware improves AI performance across four major areas:

1. Compute Architecture

Custom GPU/TPU configurations tuned for model size, batch size, and parallelization strategy.

2. Memory Hierarchy

High-throughput memory access with minimized latency, which is crucial for large language models because their weights are read from memory again for every generated token.

3. Thermal & Power Engineering

Advanced cooling and airflow layouts that keep processors at peak performance under sustained load instead of throttling.

4. Data Path Optimization

Low-latency switching, high-speed interconnects, and streamlined communication channels.
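To see why link latency and interconnect speed matter for multi-node workloads, one common back-of-the-envelope model is the latency-plus-bandwidth cost of a ring all-reduce, the collective often used to synchronize gradients across nodes. The sketch below is a simplified estimate under stated assumptions: the node count, gradient size, link speeds, and the function name are all hypothetical, and real fabrics add effects (congestion, topology, overlap with compute) that this model ignores.

```python
def ring_allreduce_seconds(num_nodes: int, message_bytes: float,
                           link_bytes_per_s: float, link_latency_s: float) -> float:
    """Estimate ring all-reduce time with a simple latency + bandwidth model."""
    if num_nodes < 2:
        return 0.0  # a single node has nothing to exchange
    steps = 2 * (num_nodes - 1)              # reduce-scatter phase + all-gather phase
    chunk_bytes = message_bytes / num_nodes  # each step sends one chunk per link
    return steps * (link_latency_s + chunk_bytes / link_bytes_per_s)


if __name__ == "__main__":
    # Hypothetical gradient buffer: 1 billion parameters at 2 bytes each.
    grad_bytes = 2e9

    # Two hypothetical fabrics: (label, bytes per second, per-hop latency in seconds).
    fabrics = [("slower fabric", 12.5e9, 10e-6),
               ("faster fabric", 50e9, 2e-6)]

    for label, bandwidth, latency in fabrics:
        t = ring_allreduce_seconds(8, grad_bytes, bandwidth, latency)
        print(f"{label}: {t * 1e3:.1f} ms per all-reduce")
```

In this model the bandwidth term dominates for large messages, while the latency term grows with the number of nodes, so both faster links and lower-latency switching reduce the time each synchronization step takes.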